It’s becoming more and more clearly recognized that the promises of the machine-learning revolution, at least for your average non-big-tech company, are not delivering the value that many had hoped for. That is, many executives are looking at the fleet of data scientists they hired and not seeing the return on investment they were hoping for. Here’s a quote from one of many articles discussing the problem:

Seven out of 10 executives whose companies had made investments in artificial intelligence (AI) said they had seen minimal or no impact from them, according to the 2019 MIT SMR-BCG Artificial Intelligence Global Executive Study and Research Report.

Beyond the fact that a lot of snake-oil salespeople over-sold the capabilities of AI in general, I believe that we data scientists, as a profession, have focused too much on algorithms and methods, and not enough on how to deliver business value.

We can deliver more value as data scientists by adopting a lean data science methodology that emphasizes experimentation and iteration at the product level instead of focusing on measures of model performance not directly tied to business value.

Lean Data Science

Lean Data Science is based in large part on the methodologies discussed in The Lean Startup. Data science projects in organizations of all sizes are a lot like startups: they’re highly uncertain, and they require a lot of upfront investment to build. Some might even say they’re circular rather than linear.

That is, they’re highly uncertain because we are often working at the frontier of what’s possible: before embarking on a new data science project, we might not know if the data we need are available, or if even the state-of-the-art models will provide adequate performance. And, even if the data exist and the model is performant, we might not know if we can actually change customer behavior in the way we want. Unlike traditional software engineering, where features can be well-scoped in advance and estimated with at least a reasonable degree of certainty, data science projects can fail even if we do everything correctly.

Of course, data science projects also tend to require a lot of up-front investment to get off the ground. Data scientists are expensive, and, anecdotally, I’ve spoken with many data scientists who might spend many months working on a project before it sees the light of day.

This extreme uncertainty and high upfront cost is a recipe for disaster: many companies will invest a lot of money in data science projects, and see nothing from them. This makes the executives feel defrauded, and the data scientists disappointed in themselves. We must do better!

In the Lean Data Science framework, we want to reduce our uncertainty as quickly as possible through validated learning. We do that by:

- Measuring business outcomes, not model performance

- Shipping early and often

- Embracing failure and accepting good enough

By standing atop these three pillars, we can deliver more value to our business with less uncertainty, not in any one project, but across our entire portfolio of data science efforts.

Measure business outcomes, not model performance

The success of a data science project depends on the business outcome it moves, not on its ROC curve. When evaluating a new data science endeavor, it’s critical to measure how the product impacts actual business KPIs.

It’s very possible to build a model that has great accuracy, but for any number of reasons doesn’t actually positively impact the business. Maybe you can predict customer churn really well, but if your intervention to actually prevent that churn doesn’t work, then having more predictive accuracy doesn’t get you anything!

The top-line measure for any AI project should always be a business metric, never a technical metric. Technical metrics can help us to understand where improvements might lie, or where something might have gone wrong, but we must never lose sight of the business metric. At root, the technical metric might impact the business metric, but we have to keep in mind that technical metrics mean nothing if they don’t move the needle for the business metric.

Sometimes, this is easier said than done. When there is lots of time and noise between the model output and the business metric that’s being affected, it can be tempting for data scientists to just focus on the things we can control and forget about everything else. Metrics like “customer retention” can often feel this way because there’s a very long lead time to see if a customer eventually churns, and there can be lots of other things that might impact that decision along the way. However, we should still not be complacent! By putting together a robust measuring plan in advance and making use of proxy metrics we can still make sure that we’re being disciplined in how we measure the impact of our models.
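As a minimal sketch of measuring the business outcome directly, the snippet below compares retention between a control group and a group that received a model-driven intervention. The function name, the group sizes, and the choice of a pooled two-proportion z-test are illustrative assumptions, not a method prescribed here; the counts are made up.

```python
import math


def retention_lift(control_retained, control_total, treated_retained, treated_total):
    """Compare retention rates between a control and a treated group.

    Returns the absolute lift (treated minus control) and a two-sided
    p-value from a pooled two-proportion z-test.
    """
    p_control = control_retained / control_total
    p_treated = treated_retained / treated_total
    lift = p_treated - p_control

    # Pooled retention rate under the null hypothesis of no difference.
    pooled = (control_retained + treated_retained) / (control_total + treated_total)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / control_total + 1 / treated_total))
    if std_err == 0:
        # Both groups retained everyone or no one; no evidence of a difference.
        return lift, 1.0

    z = lift / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return lift, p_value


# Hypothetical counts: 1,000 customers per group.
lift, p_value = retention_lift(700, 1000, 800, 1000)
print(f"retention lift: {lift:.3f}, p-value: {p_value:.2g}")
```

The point of the sketch is that the number reported to stakeholders is the change in retention, with model accuracy kept as a diagnostic behind it.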

Ship early and often

We want to know as quickly as possible if our data science project is actually going to inflect the business metric we care about, so that means we need to get to the point of having a usable and measurable product as quickly as possible.

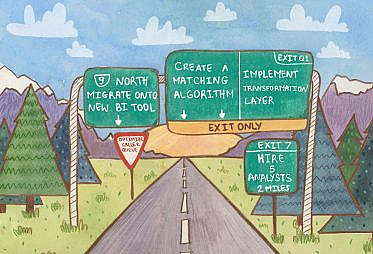

For our data science project that means we want to define what the Minimum Viable Product (MVP) is — what that means is that we want to strip away all potential features, all potential nice-to-haves, and try to get to the point of testability as quickly as possible. This is a great place to get creative:

- Instead of deploying a machine-learning model with an API, can we deploy a scrappy version with some weights hard-coded into the JavaScript?

- Instead of doing any modeling at all, can we use a rule of thumb just to test the product intervention and make sure our guess about customer behavior is right?

- What is the simplest possible type of model that we think might work?
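To make the first two ideas concrete, here is a rough sketch of what a "scrappy" first version might look like, written in Python for illustration. The feature names, weights, and threshold are all hypothetical; in practice they might be copied by hand from a quick offline fit or simply guessed by someone who knows the customers.

```python
import math

# Hypothetical weights, e.g. copied by hand from a quick offline logistic regression.
INTERCEPT = -1.0
WEIGHTS = {
    "days_since_last_login": 0.08,
    "support_tickets_last_30d": 0.4,
}


def churn_score(features):
    """Logistic score from hard-coded weights; missing features count as 0."""
    logit = INTERCEPT + sum(w * features.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1 / (1 + math.exp(-logit))


def rule_of_thumb_flag(features, max_inactive_days=14):
    """Even simpler baseline: flag anyone inactive longer than a threshold."""
    return features.get("days_since_last_login", 0) > max_inactive_days


# Hypothetical customer record.
customer = {"days_since_last_login": 20, "support_tickets_last_30d": 2}
print(churn_score(customer), rule_of_thumb_flag(customer))
```

Neither function needs a model server or a training pipeline, which is the appeal: either one is enough to put the product intervention in front of customers and start measuring.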

The anti-goal is spending weeks or months fine-tuning a model that was never going to work in the first place. This is a grave danger for data science teams: spending weeks or months in the ivory tower optimizing for precision and recall only to deploy their model and watch it crash and burn when it goes live for customers.

We want to get a first version of our product out into the world as quickly as possible so that we can measure it. We want to know sooner rather than later whether or not our beliefs and assumptions about the potential business impact will hold and we want to know if the project is worth additional ongoing investment.

Of course, like the good scientists we are, we should be following the scientific method and making these hypotheses explicit before going out in the world and just running experiments willy-nilly. Building the habit of writing down explicit hypotheses about how our model is going to impact business metrics, and then being disciplined about testing those hypotheses can help us make sure that we’re learning as much as we can from our efforts.

Embrace failure and accept good enough

If we’re moving quickly and testing our product aggressively, many of those products will fail. Most of them, even. That can feel very scary — admitting failure is hard, but if we want to maximize the impact that we can have as data scientists, we have to embrace failure.

If we are evaluating our models based on a business metric and that metric doesn’t improve, we should be prepared to drop that project and move on to another. We should make this decision unemotionally, and, critically, we should make sure that we communicate the riskiness of our endeavors to our stakeholders so that failure is seen as a learning and not as a reason to defund the program. Failure is a necessary part of a data science program, and the more and faster we fail the faster we will strike onto the product that truly unlocks step-change value for our business. One way to accomplish this is to communicate hypotheses (and note that they’re hypotheses!) up front, and then make sure to always broadcast the learnings from a project to a wide group of stakeholders, even if the learnings are “this didn’t work.”

In addition to embracing failure, we should always be ready to accept “good enough” and be ready to move on quickly. We should recognize that 80% of the value in a Data Science project will come from the first pass at a model — if that works and we deploy it, we should be out looking for the next most valuable project, which probably isn’t tuning that model to make it marginally better.

Often, we can get more value out of three data products based on logistic regression than we can from one that uses the newest deep-learning algorithm. We should evaluate our investments of additional effort based on the expected business impact — if tweaking the model to get 2% improved recall isn’t going to have a large business impact, we should move on to the next task.
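One rough way to frame this trade-off is as an expected-value comparison across candidate efforts. The sketch below is an assumption about how a team might do the arithmetic, not a formula from any particular source; the probabilities, payoffs, and costs are invented for illustration and would in practice be estimates agreed with stakeholders.

```python
def expected_net_value(success_probability, value_if_success, cost):
    """Expected payoff of an effort: chance of success times payoff, minus cost."""
    return success_probability * value_if_success - cost


def rank_efforts(efforts):
    """Sort candidate efforts by expected net value, highest first."""
    return sorted(
        efforts,
        key=lambda e: expected_net_value(e["p"], e["value"], e["cost"]),
        reverse=True,
    )


# Hypothetical candidates, with value and cost in the same currency units.
candidates = [
    {"name": "tune existing model for +2% recall", "p": 0.8, "value": 10_000, "cost": 15_000},
    {"name": "new logistic-regression product", "p": 0.3, "value": 200_000, "cost": 20_000},
]
for effort in rank_efforts(candidates):
    print(effort["name"], expected_net_value(effort["p"], effort["value"], effort["cost"]))
```

Even with crude inputs, writing the numbers down makes it harder to justify another month of tuning when a riskier new product has a much larger expected payoff.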

Unfortunately, some data scientists can end up feeling a bit disappointed when they hear this: they want to be working with the shiny new models and feel like they’re doing cutting-edge work. I would counsel these people to think hard about what it is they really want. If what they want is to do research, then maybe working in industry (especially on an early-stage data science project or program that hasn’t yet demonstrated business value) isn’t the right place for them. If what they want is to have an impact (and reap the professional rewards that come with that), then they should recognize that this means prioritizing what is most impactful over what may be the most interesting.

Conclusion

We want to maximize business value from our highly uncertain products. We can do that by taking a disciplined approach to validated learning in our data products. As long as we evaluate our work based on expected business impact and we are prepared to move on from projects that no longer seem valuable, we can increase the total amount of value our teams deliver for our organization.

In order to deliver on the promise of the data science revolution, we must be lean.